Context Window

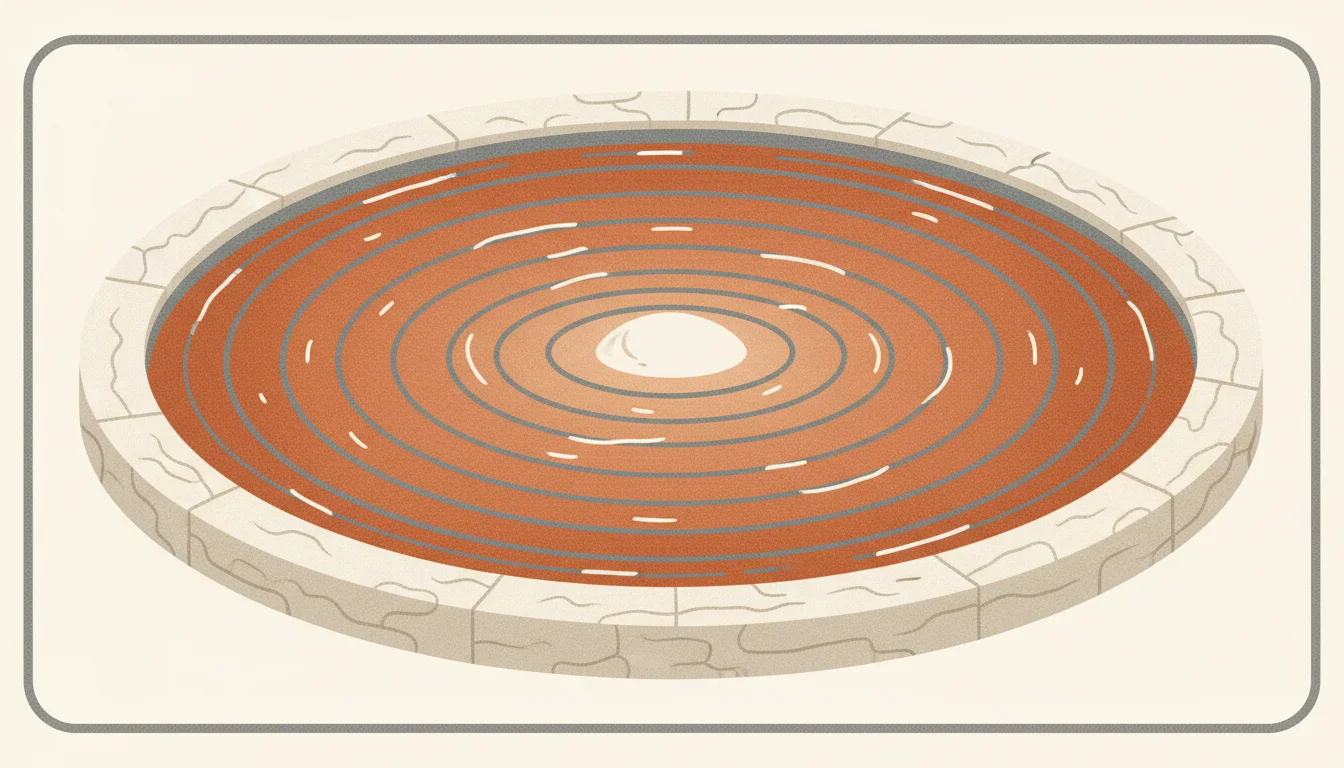

The context window is the maximum number of tokens a language model can process in a single request, including both the input prompt and the generated output. It defines how much information the model can consider at once when generating a response.

How It Works

Think of the context window as the model's working memory. Everything the model needs to know for a given request has to fit inside this window: the system prompt, conversation history, retrieved documents, user question, and the space for the response.

Context window sizes have grown fast. The original GPT-3 had a 2K-token window, and GPT-3.5 raised that to 4K. GPT-4 launched with 8K and 32K variants, and GPT-4 Turbo offered 128K. Claude 3.5 Sonnet and Claude 4 models support 200K tokens, with Claude reaching 1M in enterprise previews. Google's Gemini 1.5 Pro supports up to 2M tokens, and Gemini 2.0 Flash supports 1M. Larger windows let you include more context, but they don't come free.

The economics matter. Self-attention compute is O(n²) in sequence length, and providers bill per token, so cost and latency both climb as you stuff more tokens in. Sending 100K tokens of context when 10K would have been enough wastes money and slows responses. Prompt caching (supported by Anthropic and OpenAI) helps by pricing repeated prefixes at a deep discount, but only when your prefix is actually stable across requests.
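To make the scaling concrete, here is a back-of-the-envelope sketch. It assumes attention-score work grows purely with the square of sequence length and ignores linear terms, kernel optimizations, and batching, so treat the numbers as an intuition aid rather than a latency prediction. The function name is illustrative.

```python
def relative_attention_cost(n_tokens: int, baseline_tokens: int) -> float:
    """Ratio of attention-score work at n_tokens vs. baseline_tokens.

    Assumption: pure O(n^2) scaling, nothing else modeled.
    """
    return (n_tokens / baseline_tokens) ** 2


# 10x more tokens implies roughly 100x the attention-score work
# under this simplified model.
print(relative_attention_cost(100_000, 10_000))  # 100.0
```

The billed price of those tokens usually grows linearly, not quadratically, so the 10x larger prompt costs about 10x more in token fees while the underlying compute grows faster.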

For enterprise applications, context window size affects architecture decisions. With a small window, you need aggressive chunking and precise retrieval to fit the most relevant information. With a large window, you can include more documents, but you also pay more per request and may still lose accuracy to the lost-in-the-middle effect, where models pay more attention to information at the start and end of the context than to the middle.
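One common mitigation for the lost-in-the-middle effect is to order retrieved chunks so the highest-ranked ones sit at the edges of the context and the weakest land in the middle. The sketch below is one way to do that, assuming the chunks arrive sorted best-first from a retriever. The function name is hypothetical, and whether the reordering helps depends on the model.

```python
def reorder_for_edges(chunks_best_first: list[str]) -> list[str]:
    """Place the best chunks at the start and end, the weakest in the middle."""
    front: list[str] = []
    back: list[str] = []
    for i, chunk in enumerate(chunks_best_first):
        # Alternate: even ranks go to the front, odd ranks to the back.
        (front if i % 2 == 0 else back).append(chunk)
    # Reverse the back half so the second-best chunk ends up last.
    return front + back[::-1]


ranked = ["rank1", "rank2", "rank3", "rank4", "rank5"]
print(reorder_for_edges(ranked))
# ['rank1', 'rank3', 'rank5', 'rank4', 'rank2']
```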

There's also a real quality ceiling. Benchmarks like RULER and Needle-in-a-Haystack show that effective context often ends before nominal context. A model advertised at 200K tokens may only reliably retrieve details from the first and last 50K. This is improving, but it's why RAG still matters even with long-context models: feeding the right 5K tokens beats dumping 200K and hoping the model finds the relevant sentence.

In practice, most enterprise AI applications use effective contexts between 4K and 32K tokens per request. Long-context patterns shine for document analysis, codebase understanding, and multi-turn conversations where the history itself is the context worth carrying.

In Practice

As of 2026, common context windows are: Claude 3.5 Sonnet and Claude 4 at 200K standard and 1M in enterprise tier, GPT-4o at 128K, GPT-4.1 at 1M, Gemini 1.5 Pro and 2.0 at 1M-2M, and open models like Llama 3.1 70B at 128K. Prompt caching on Anthropic bills cache reads at about 10% of the base input price, with a 5-minute default TTL; OpenAI caches repeated prompt prefixes automatically, with its own discount and retention rules.

Typical allocation inside a 32K window for a RAG system: system prompt 500 tokens, retrieved chunks 20,000 tokens, conversation history 6,000 tokens, user question 500 tokens, reserved for response 5,000 tokens. For agents, the history can grow fast across tool calls, so teams either truncate older turns, summarize them with a separate LLM call, or move them to long-term memory in a vector store.
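The allocation above can be written down as a small budget object so that a change to one slice is checked against the window. This is a minimal sketch: the class and field names are illustrative, and the window is taken as 32,000 tokens to match the figures in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextBudget:
    """Token allocation for one request; defaults mirror the 32K RAG example."""
    window: int = 32_000
    system_prompt: int = 500
    retrieved_chunks: int = 20_000
    history: int = 6_000
    user_question: int = 500
    response_reserve: int = 5_000

    def input_total(self) -> int:
        # Everything sent to the model before it starts generating.
        return (self.system_prompt + self.retrieved_chunks
                + self.history + self.user_question)

    def fits(self) -> bool:
        # Input plus reserved output must stay inside the window.
        return self.input_total() + self.response_reserve <= self.window


budget = ContextBudget()
print(budget.input_total())  # 27000
print(budget.fits())         # True

# Growing the history slice without shrinking another slice overflows.
print(ContextBudget(history=8_000).fits())  # False
```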

A working pattern for long-context workloads: cache the large reference material (product catalog, legal corpus, codebase) as a stable prefix via Anthropic prompt caching, then append only the per-request variables (user query, recent history). On Anthropic's API that's done by marking cache_control breakpoints on content blocks in the request (in the system prompt, tool definitions, or messages). For Gemini's 1M-token workloads, the Context Caching API works similarly but is billed by cached token count and storage duration.
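The stable-prefix pattern can be sketched as a request payload for Anthropic's Messages API. The builder below only assembles the arguments, so it runs without an API key. The function name, the instruction text, and the `MODEL_ID` placeholder are assumptions for illustration. The `cache_control` marker of type `ephemeral` sits on the last block of the stable prefix.

```python
def build_cached_request(reference_text: str, user_query: str,
                         model: str, max_tokens: int = 1024) -> dict:
    """Assemble Messages API arguments with the reference material cached."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": [
            # Short instructions first, then the large stable material.
            {"type": "text",
             "text": "Answer using only the reference material provided."},
            # The breakpoint goes on the final block of the stable prefix;
            # everything up to and including this block is cacheable.
            {"type": "text",
             "text": reference_text,
             "cache_control": {"type": "ephemeral"}},
        ],
        # Per-request variables come after the cached prefix.
        "messages": [{"role": "user", "content": user_query}],
    }


request = build_cached_request(
    reference_text="<product catalog text>",
    user_query="Which plans include SSO?",
    model="MODEL_ID",  # placeholder: substitute a real model identifier
)

# Sending it requires the anthropic package and an API key:
#   import anthropic
#   client = anthropic.Anthropic()
#   response = client.messages.create(**request)
```

Keeping the volatile parts (query, recent history) after the breakpoint matters: any change to text before the breakpoint produces a different prefix and a cache miss.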

Worked Example

A financial analyst at a mid-sized asset manager uses an internal research assistant to answer questions about earnings calls. Each earnings transcript runs about 12,000 tokens. The firm tracks 400 portfolio companies, so a single quarter of transcripts is roughly 4.8M tokens and four quarters is roughly 19.2M, nowhere close to fitting in a single context window.

The first architecture loads the transcripts for a target ticker directly into Claude Sonnet's 200K window: the last 4 quarters, roughly 48K tokens, plus the system prompt, conversation history, and question. Total per request: around 55K tokens. Cost per query: roughly 17 cents at 2026 rates, with latency around 4 seconds. Answer quality is good, but the cost adds up fast across 50 analysts asking dozens of questions each day.

The team switches to a hybrid pattern. They cache a stable 48K-token bundle (4 quarters of transcripts for the user's focus ticker) using Anthropic prompt caching, which drops the cost of the cached prefix to about 10% of the base input price after the first call. Follow-up questions in the same session reuse the cache. Effective cost per query drops from 17 cents to about 3 cents on cached requests, latency drops to around 1.5 seconds, and the analyst gets the same answer quality. Monthly spend on the assistant falls by about 70% with no quality hit.
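The per-query figures can be roughly reproduced with input-side arithmetic. This sketch assumes a base input price of 3 USD per million tokens, which is an illustrative figure rather than a quoted rate, and uses the 10% cache-read multiplier from the text. It leaves out output tokens and the surcharge applied when a prefix is first written to the cache, so it slightly understates real spend.

```python
INPUT_PRICE_PER_MTOK = 3.00    # assumption: USD per million input tokens
CACHE_READ_MULTIPLIER = 0.10   # from the text: cached prefix at ~10% of base


def input_cost_usd(cached_tokens: int, fresh_tokens: int) -> float:
    """Input-side cost of one request; output tokens are not included."""
    # Cached tokens are billed at a fraction of the base price.
    billable = cached_tokens * CACHE_READ_MULTIPLIER + fresh_tokens
    return billable * INPUT_PRICE_PER_MTOK / 1_000_000


# First architecture: all 55K tokens billed at the full input price.
uncached = input_cost_usd(cached_tokens=0, fresh_tokens=55_000)

# Hybrid: 48K-token transcript bundle read from cache, 7K tokens fresh.
cached = input_cost_usd(cached_tokens=48_000, fresh_tokens=7_000)

print(f"uncached: ${uncached:.3f}")  # about 17 cents
print(f"cached:   ${cached:.3f}")    # about 3 to 4 cents
```

The monthly saving is smaller than the per-query ratio suggests because the first call in each session, and any call made after the cache expires, is billed at the uncached rate or higher.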

What People Get Wrong

Myth

A bigger context window always improves answer quality.

Reality

Up to a point. Beyond the model's effective attention range, adding tokens dilutes rather than helps. The lost-in-the-middle effect means details in the middle of a huge context get attended to less than details at the start or end. RULER and similar benchmarks show effective context is often smaller than advertised. Past that point, more tokens mean more cost and more latency with no quality gain.

Myth

Long context windows eliminate the need for RAG.

Reality

You can stuff 1M tokens into Gemini 1.5, but you pay for every token on every call, latency balloons, and the model still misses details in the middle. RAG stays useful because it selects the right 5-10K tokens instead of the whole corpus. The combination works well: long context for the conversation and reasoning, RAG for precise document lookup.

Myth

Conversation history is free context.

Reality

Every turn adds tokens, and tokens cost money per request. A 20-turn chat with 500-token messages each way is 20,000 tokens of history before you add the system prompt or retrieved context. Long conversations need summarization, selective retention, or long-term memory in a vector store. Systems that just keep appending will hit the context limit and the cost ceiling together.
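The arithmetic behind this, and the simplest of the mitigations (keeping only the most recent messages that fit a budget), can be sketched as follows. The function names are illustrative, and messages are modeled as (text, token_count) pairs with counts assumed to come from whatever tokenizer the application already uses.

```python
def history_tokens(turns: int, tokens_per_message: int = 500) -> int:
    """Tokens of history after `turns` full turns (user + assistant each)."""
    return turns * 2 * tokens_per_message


def cumulative_input_tokens(turns: int, tokens_per_message: int = 500) -> int:
    """Total input tokens billed across the chat when history is re-sent."""
    total = 0
    for turn in range(1, turns + 1):
        prior = history_tokens(turn - 1, tokens_per_message)
        total += prior + tokens_per_message  # prior history + new user message
    return total


def keep_recent(messages: list[tuple[str, int]],
                budget: int) -> list[tuple[str, int]]:
    """Keep the newest messages whose token counts fit within `budget`."""
    kept: list[tuple[str, int]] = []
    used = 0
    for text, n_tokens in reversed(messages):  # walk newest to oldest
        if used + n_tokens > budget:
            break
        kept.append((text, n_tokens))
        used += n_tokens
    return kept[::-1]  # restore chronological order


print(history_tokens(20))           # 20000
print(cumulative_input_tokens(20))  # 200000
```

The second number is the one that shows up on the bill: because each request re-sends the accumulated history, a 20-turn chat pays for about 200,000 input tokens in total, not 20,000.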
